http://personalpages.manchester.ac.uk/staff/m.dodge/atlas/Atlas_with_cover_high.pdf

As announced today (Oct. 22nd, 2008) on the nettime mailing list, the Atlas of Cyberspace, originally published in 2001, is now fully available for download and licensed under Creative Commons.

<< preface

it is also meant to be an additional resource of information and recommended reading for my students of the prehystories of new media class that i teach at the school of the art institute of chicago in fall 2008.

the focus is on the time period from the beginning of the 20th century up to today.

2008-10-23

>> "The Atlas of Cyberspace", Martin Dodge & Rob Kitchin, 2001

Posted by Nina Wenhart at 1:56 AM | 0 replies | tags: cyberspace, publications

2008-10-22

>> "the golem", 1920

Posted by Nina Wenhart at 6:33 AM | 0 replies | tags: history, video

2008-10-21

>> next 5 minutes reader

http://www.debalie.nl/mmbase/attachments/193923/n5m4_reader.pdf

from: http://www.next5minutes.org/about.jsp

"What is Next 5 Minutes?

Next 5 Minutes is a festival that brings together media, art and politics.

Next 5 Minutes revolves around the notion of tactical media, the fusion of art, politics and media. The festival is organised irregularly, when the urgency is felt to bring a new edition of the festival together.

How did this particular edition of the festival come about?

The fourth edition of the Next 5 Minutes festival is the result of a collaborative effort of a variety of organisations, initiatives and individuals dispersed world-wide. The program and content of the festival is prepared through a series of Tactical Media Labs (TMLs) organised locally in different cities around the globe. This series of Tactical Media Labs started on September 11, 2002 in Amsterdam and they continue internationally right up to the festival in September. TMLs have been organised in: Amsterdam, Sydney, Cluj, Barcelona, Delhi, New York, Singapore, Birmingham, Nova Scotia, Berlin, Chicago, Portsmouth, Sao Paulo, Moscow, Dubrovnik, and Zanzibar. The results of the various TMLs are published in a web journal, at: http://www.n5m4.org

What are the main themes of N5M4?

The program of Next 5 Minutes 4 is structured along four core thematic threads, bringing together a host of projects and debates. These four thematic threads are as follows. "The Reappearing of the Public" deals with the elusiveness of the public that tactical media necessarily needs to interface with, and considers new strategies for engaging with or redefining 'the public'. "Deep Local (Growing Roots for the Global Village)" explores the ambiguities of connecting essentially translocal media cultures with local contexts. "The Tactics of Appropriation" asks who is appropriating whom: corporate, state, or terrorist actors all seem to have become effective media tacticians, so is the battle for the screen therefore lost? Finally, "The Tactical and the Technical" questions the deeply political nature of (media-)technology, and the role that the development of new media tools plays in defining, enabling and constraining its tactical use.

Formats

The festival explores a variety of forms and formats, from low-tech to high-tech, from seminars and debates to performances and urban interventions, screenings, installations as well as sound projects and live media, a pitching session, a tool builders fair and open unmoderated spaces. Defining for tactical media is not the medium itself, but the attitude towards media."

Posted by Nina Wenhart at 6:46 PM | 0 replies | tags: conference, publications, tactical media

2008-10-03

>> Jasia Reichardt, "Cybernetic Serendipity", audiofile from conference, 2005

go: http://radio.sztaki.hu/node/get.php/666pr588

from the conference "Parliaments of Arts 2005", Vienna

Posted by Nina Wenhart at 10:22 PM | 0 replies | tags: audiofiles, cybernetic art

>> Saul Albert, "Artware", 1999

from: http://twenteenthcentury.com/saul/artware.htm

Saul Albert, 08/99, Published in Mute Issue 14

Artware

"The idea becomes a machine that makes the art" Sol LeWitt, Artforum, 1967.

The rise of Conceptual Art, which occurred around the time that Sol LeWitt wrote "Paragraphs on Conceptual Art", coincided neatly with the birth of hacker culture--between the transformation of MIT's Tech Model Railroad Club into the AI lab in 1963-4, and 1969, the year that ARPANET was set up. Although it is not possible to chart the links between these events in a linear fashion, it is interesting to see their more recent convergence. Artist-programmers have been hunched over computer screens in bedroom-studios (and now, trendy new media labs), bearing much resemblance to the stereotypical teenage hacker of the 80s. Many of the ideas in LeWitt's text suggest a strong analogy between the Conceptualist use of the 'idea-becoming-machine' and contemporary uses of software in art.

It is one of the defining characteristics of computer programmes that they cross the boundaries between user and author. The move towards software engineering from a more commonplace 'click here' approach to computer based art can be seen as an attempt by artists to engage the user as a co-author of their experience. This relates clearly to the conceptualist strategy of relying on the viewer to make (or imagine the making of) the artwork:

"To work with a plan that is pre-set is one way of avoiding subjectivity. (...) The plan would design the work".

LeWitt saw the execution of the conceptual plan as a tactic for avoiding the 'expressive', or self-consciously authored, art object. The conceptualists developed the form of 'instructions for the making of art'. This represented a shift in authorial hegemony from a centralised model (centred in the body of the artist) to a distributed one. However, although by following the instructions anyone could make the artwork, the instructions themselves retained the authorial privilege. The 'original' idea remained sacrosanct. This highlights a contradiction between the stated intention to de-subjectify the artwork and the final result, in which the user/viewer is still subjected to the didactic stance of the artist. (1)

In a recent interview with Tilman Baumgaertel, John F. Simon Jr. describes the workings of his homemade paint programme:

"Using the artwork to create more artwork... when you run the programme you are demonstrating the writing of the programme."

The use of the programme generates artwork, and Simon invests equal artistic value in the programme itself. It seems that Simon's programmed artwork retains LeWitt's contradiction: on the one hand it enables the user to direct the making of artworks, but at the same time it prevents them from directing the way in which the artworks are made, a fact he acknowledges in his interview:

"I have to say that I am not very interested in defining my work through the actions of other people".

This limitation on authorship can be attributed to other factors besides Simon's Conceptualist artistic heritage. The limitations placed on the user of the artwork are framed by the artist's limited authorial privilege in writing and running the programme. For example, the programme is written in a programming language that has a given structure and syntax to which the artist must adhere in order for it to function. Aside from this and countless other dependencies, the artwork/software runs within an Operating System that has a given visual feel and a given functional structure, not to mention the political, cultural, economic and legal intricacies of IT infrastructure. Of course, all of these limitations have analogues in the physical world of canvas, plaster, dealer and gallery, but it is the nature of these limitations that makes the programmer/artist a distinctive figure.

The structures that surround the work of the Artist/Programmer can be examined by looking at the various ways in which artists approach software. Without pretence to exhaustive analysis, I will present the work of a few artists who represent diverse approaches to the artistic use of software.

Keith Tyson wrote his Art Machine programme using Prolog, a language well suited to AI applications. He feeds the programme with a variety of sculptural ingredients; the Art Machine then translates these into instructions on how to make a sculpture. Tyson makes the sculptures, exhibits them and sells them on the art market. The relationship Tyson has with this programme is mutually controlling. He programmes the Art Machine with possible sculptural ingredients and a framework for configuring them, then the Art Machine programmes him with Conceptualist style 'instructions' for making artwork. The sculptural product of the process can then be introduced to the art market, which has its own means of distributing, evaluating and promoting sculptural forms (2). Tyson subjects himself to programming, much in the same way as John F. Simon does when he--rather than another subjected user--is running his homemade software. The products of these interactions are manifestations of the artist's ideas, displayed in a compatible format (sculpture and drawing) for assimilation by the art market. The viewers are placed in an art gallery context, and have no direct interaction with the Art Machine other than by seeking its rationale through its many bizarre products. The viewers are invited to examine how Keith's relationship with the Art Machine affects his status as the artist, and theirs as the viewer (3).
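To make this 'mutually controlling' loop more concrete, here is a minimal sketch in Python. It is not Tyson's program: the essay only says the Art Machine was written in Prolog and turns sculptural ingredients into instructions, so the ingredient lists and the hypothetical `art_machine` function below are invented for illustration. The artist supplies the vocabulary; the program hands back an instruction for a human to carry out.

```python
import random

# Hypothetical ingredient lists: the essay does not document the vocabulary
# or rules of Tyson's actual Art Machine, which was written in Prolog.
MATERIALS = ["plaster", "steel", "felt", "resin"]
FORMS = ["cube", "column", "spiral", "grid"]
OPERATIONS = ["stack", "suspend", "bisect", "repeat"]


def art_machine(seed, materials=MATERIALS, forms=FORMS, operations=OPERATIONS):
    """Combine ingredients into an instruction for a human to carry out.

    A private random generator seeded with `seed` picks one item from each
    list, so the same seed always produces the same instruction.
    """
    rng = random.Random(seed)
    material = rng.choice(materials)
    form = rng.choice(forms)
    operation = rng.choice(operations)
    count = rng.randint(2, 9)
    # Naive pluralisation: works for the regular nouns in FORMS above.
    return f"Make {count} {form}s of {material}, then {operation} them."


if __name__ == "__main__":
    for seed in range(3):
        print(art_machine(seed))
```

Because each call builds its own generator from `seed`, the same seed always yields the same instruction, a small echo of LeWitt's 'plan that is pre-set': the executor (here, the artist) only carries it out.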

Paul Garrin's name.space (NS) project is realised and distributed in an entirely different arena. NS is an alternative autonomous Domain Name System with which Garrin hopes to establish a 'Permanent Autonomous Net'. He speaks about the existing Domain Name System as a dominating and semantically territorial regime controlled nefariously by ex-CIA officials.

"In the meme of the 'DOMAIN NAME SYSTEM' the message is control, 'DOMINATION', 'TERRITORY'." (Paul Garrin interviewed by Pit Schultz, Nettime, 13th June 1997).

Whether or not this is the case, Garrin's creative use of software is masterful. With only a couple of servers he has created an alternative domain name system. His system does not rely on geographical referents such as .uk, .au, or .jp. Name Space is open to user-directed suggestion as to how the name syntax is defined. http://timothy.leary is one example of an NS address. The art world is sidelined here. Garrin is playing to a potentially mass market, and for potentially high financial stakes. Other companies with similar ideas, such as AlterNIC, started up at around the same time as NS, so he even had commercial competition. His right to incorporate his system into the mainstream DNS is being contested in the courts.
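A toy model may help show why an alternative root is enough to create names like timothy.leary: in DNS, which top-level domains exist at all is decided by the root zone a resolver consults. The sketch below, in Python, is not how name.space was built and leaves out the actual DNS protocol (delegation, caching, record types); the two root tables and the hypothetical `resolve` helper are assumptions for illustration, with addresses from the 192.0.2.0/24 block reserved for documentation.

```python
# Toy model only: real DNS resolution involves delegation, caching and a
# wire protocol, all omitted here. Zone contents are hypothetical and use
# the 192.0.2.0/24 documentation range (RFC 5737).
LEGACY_ROOT = {
    "com": {"example.com": "192.0.2.10"},
    "jp": {"example.jp": "192.0.2.11"},
}

# An alternative root may carry the existing TLDs as well as new ones.
ALTERNATIVE_ROOT = {
    **LEGACY_ROOT,
    "leary": {"timothy.leary": "192.0.2.20"},
}


def resolve(name, root):
    """Return the address of `name` under `root`, or None if it does not resolve."""
    name = name.lower().rstrip(".")  # DNS names are case-insensitive
    tld = name.rsplit(".", 1)[-1]
    zone = root.get(tld)
    if zone is None:
        return None  # this root has no such top-level domain at all
    return zone.get(name)


if __name__ == "__main__":
    for root_name, root in [("legacy", LEGACY_ROOT), ("alternative", ALTERNATIVE_ROOT)]:
        print(root_name, resolve("timothy.leary", root))
```

Run as a script, it reports no address for timothy.leary under the legacy table and 192.0.2.20 under the alternative one. Nothing about the target host changes between the two lookups; only the root table does, which is roughly the leverage an alternative root like name.space works with.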

This artistic use of software attempts to throw off some of the strictures of the technology that Internet users are subjected to. Both of the pieces of software Garrin uses--Apache and WebStar--are available free (or as demo-ware) over the Internet, and neither was designed with the creation of independent Domain Name Servers in mind, yet Garrin cunningly exploits their functionality to that end. His idea is to facilitate a usage of the Internet that is less mediated by commercial and governmental interests, allowing a user's Internet presence to be nominally self-directed. By playing with the server software that makes up the infrastructure of the Net, he is attempting to bolster the authorial rights of its inhabitants. In this struggle for (signified) territory, Garrin takes his cue from the Situationist tactic of détournement, using the technology of the dominators to undermine and subvert their aims (4).

The art collective Mongrel has also taken this Situationist approach to software, by hacking into a popular commercial image editing application and giving it a political charge. The user is invited to edit their heritage using this software tool, and with commands such as 'purge' and 'invert', to alter the image of a skin-masked face in a racially charged visual language. This method of software intervention derives from a hacking tradition of game patching; writing software agents or altering image resources to change the look or function of pre-existing software.

Mongrel breaks the smooth simulated surface of the programme and gives the user a look into the politically dubious and racialized norms of routinely used software. The cropped language of the 'commands' ("Purge", "Execute") reveals the software's own military heritage, and the shocking imagery combined with the 'user-friendly' interface is very unsettling. By altering the programme in these ways, Mongrel shows how these mainstream programmes direct what is produced using them, and even limit the imagination and capabilities of users.

"An emphasis on specific objects gave way to an investigation of instructions as an art form and the role of the artist as communicator to the public gave way to the artist as instigator of public events." Jon C. Ippolito on artistic developments since the 1960s, Nettime, 04 Sept 1998

In early 1999 the panel of judges for the Prix Ars Electronica chose Linux, the Open Source Operating System (5), as the winner in the .net category. If just the name Linux sends you into a boredom-induced coma, skip the next paragraph, in which I will try to outline some of the reasons Linux won.

The legalities at the basis of Linux's usage are dealt with by licence under the GPL (GNU General Public Licence), which frees it from the grasp of commercial software corporations. The central ethos of its development policy has been to make available all the information, tools and code necessary for users to alter the program; the Operating System does not constitute a visual and functional given for any artwork/software made or shown using Linux. The ability of Linux to gather a community of user/authors was acknowledged as a contributing factor in it winning the Golden Nica. The distribution, evaluation and promotion of Linux are carried out within this Open Source community, ensuring its continuity and growth.

It is this combination of features that allowed the Linux development community to grow so large that Linux's efficiency, quality, and speed of reaction to user demand far outclass the commercial competition. As a result of this, and of the tumult of media hype now surrounding Linux, it has become the only real challenger to Microsoft's market domination.

As to how the judges came to choose Linux for the Ars Electronica prize, LeWitt's words are resonant:

"The idea becomes a machine that makes the art"

When Linux is examined using artistic criteria, it reveals a very high degree of critical rigour in its execution and conception (this rigorous approach was necessary to the legality of the project). Most of all, Linux is a beautifully clear realisation of the idea of Open Source. By awarding the Golden Nica to Linux, the judges were revealing the connection between LeWitt's Conceptualism and the hacker/hobbyist dreams of the last forty years.

It is the idea of Open Source that became a machine (Linux) which both constitutes and facilitates the artwork.

Saul Albert

08/99

Published in Mute Issue 14

ISSN 1356-7748

Notes

- (1) I am not criticising LeWitt's work with this observation; I am simply pointing out a link between his work and the issues surrounding the work of artists using software.

- (2) Keith has drawn up Jackson structure diagrams (family tree-like hierarchical arrangements) of the way money flows through the art market. His use of the Art Machine to interface with these money flows is extremely well calculated.

- (3) Keith's underused "Replicators" project for adaweb works along similar lines and is worth a try at: http://adaweb.walkerart.org/influx/tyson/

- (4) Retired artist and "aspiring revolutionary" Heath Bunting relates to this territorial struggle in a recent interview at London's Expo Destructo. Although he has shifted ground to biotech, his intentions and methods are very similar to Garrin's.

- (5) For those of you who don't know what Open Source is, try Eric S. Raymond, "The Cathedral and the Bazaar"; for now, though, the characteristics outlined in the main text may give you some idea.

Bibliography

- Sol LeWitt, "Paragraphs on Conceptual Art", Artforum vol. 5 no. 10, Summer 1967, pp. 79-83

- Jon C. Ippolito, "more on Bochner/jodi/formalism", nettime-l mailing list, 22 May 1998

- Tilman Baumgaertel, "Interview with John F. Simon", nettime-l mailing list, 19 July 1999

- Pit Schultz, "Interview with Paul Garrin", posted by MediaFilter to the nettime-l mailing list, 13 June 1997

Posted by Nina Wenhart at 9:45 PM | 0 replies | tags: code art, text

>> Inke Arns, "Read_me, run_me, execute_me", 2004

from: http://www.medienkunstnetz.de/themes/generative-tools/read_me/

«Software is mind control. Come and get some.» [1]

'Generative art' has become a fashionable term over the last two years and can now be found in such varied contexts as academic discourse, media arts festivals, architects’ offices, and design conferences. It is often used in a way that makes it indistinguishable from the term 'software art', if not as a direct synonym for it. Generative art and software art are obviously related terms–but exactly what their connection is often remains a mystery. This essay attempts to shed some light on the relationship between generative art and software art.

Generative art ≠ Software art

According to Philip Galanter (2003), generative art refers to "any art practice where the artist uses a system, such as a set of natural language rules, a computer program, a machine, or other procedural intervention, which is set into motion with some degree of autonomy contributing to or resulting in a completed work of art." [2] Thus, generative art refers to processes that run autonomously, or in a self-organizing way, according to instructions and rules pre-programmed by the artist. Depending on the technological context in which the process unfolds, the result is unpredictable and thus less the product of individual intention or authorship than the product of the given working conditions. [3] This definition of generative art (as well as some other definitions) is, as Philip Galanter writes, an 'inclusive', comprehensive, wide-reaching definition, which leads Galanter to the conclusion that "generative art is as old as art itself." [4] The most important characteristic of any description (or attempted description) of generative art, whether in electronic music and algorithmic composition, computer graphics and animation, on the demo scene and in VJ culture, or in industrial design and architecture [5], is that generative processes are used to negate intentionality. Generative art is only concerned with generative processes (and in turn, software or code) insofar as they allow–when viewed as a pragmatic tool that is not analysed in itself–the creation of an 'unforeseeable' result. It is for precisely this reason that the term 'generative art' is not an adequate description of software art. 'Software art', on the other hand, refers to artistic activity that enables reflection on software (and software’s cultural significance) within the medium – or material – of software. It does not regard software as a pragmatic aid that disappears behind the product it creates, but focuses on the code it contains–even if the code is not always explicitly revealed or emphasised.
Software art, according to Florian Cramer, makes visible the aesthetic and political subtexts of seemingly neutral technical command sequences. Software art can base itself on a number of different levels of software: the level of source code, that of the abstract algorithm, or that of the product created by a given piece of code. [6] This is shown in the wide variety of different projects ranging from 'codeworks' (which consist only of ASCII code and in most cases cannot be executed) and experimental web browsers – «Webstalker» (1997) – down to executable programmes. Just as software is only one of many materials used in generative art, software art can also contain elements of generative art, but does not necessarily have to be technically generative. Thus, the terms 'generative art' and 'software art' cannot be used as synonyms under any circumstances. Rather, the two terms are used in different registers, as I will attempt to show in the following passage.

Dragan Espenschied / Alvar Freude

As part of their thesis «insert_coin», Dragan Espenschied and Alvar Freude secretly installed a web proxy server at the Merz Academy in Stuttgart in 2000/2001. Under the slogan "two people controlling 250 people," the proxy server used a Perl script to manipulate the entire web traffic of both students and faculty members on the Academy’s computer network. The goal of this, according to Espenschied and Freude, was to "examine the users’ competence and critical faculty in terms of the every-day medium Internet." [7] The manipulated proxy server redirected requested URLs to other pages, modified HTML formatting code, and used a simple search-and-replace function to change both news reports on news sites (by changing the names of politicians, for example) and the content of private e-mails accessed via web interfaces such as Hotmail, GMX, and Yahoo!. The manipulated web access was in place for four weeks without being noticed by students or staff at the Merz Academy, and when Espenschied and Freude revealed details of the experiment, practically no-one was interested. Although the artists published an easy-to-follow guide to disabling the filters, only a very small percentage of those affected took the time to make a simple adjustment in order to regain access to unfiltered information. [8]

textz.com

My second example, «walser.php», walser.pl, and «makewalser.php» by textz.com/Project Gnutenberg, is a form of political literary [9] software, or more specifically anti-copyright activist software, and was developed as a reaction to one of the largest literature scandals in Germany since 1945. The file name walser.php is not only an ironic reference to walser.pdf, an electronic version of Martin Walser’s controversial novel sent by the Suhrkamp publishing house via e-mail and later recalled; it is a PHP script that takes 10,000 lines of source code and uses the PHP interpreter to generate an ASCII text version of Walser’s Tod eines Kritikers (Death of a Critic). While the PHP source code does not contain the novel in visible or readable form and can thus be freely distributed and modified under the GNU General Public License, it may only be executed with the written permission of Suhrkamp. [10] While Espenschied and Freude's experiment on filtering and censorship of Internet content points out software’s practically infinite potential to control (and be controlled), walser.php offers a practicable way of handling the commercial restrictions, in particular, that seek to hinder freedom of information through digital rights management (DRM) systems. Whereas insert_coin temporarily makes a dystopic scenario reality by manipulating software, textz.com with walser.php develops genuinely utopian "countermeasures in the form of software." [11] These projects are generative in the best sense of the word. And yet neither insert_coin nor walser.php perfectly fits the definitions of generative art as they are currently used in the design field. Philip Galanter, whom I have already quoted and who is surely among the most prolific generative art theorists at present, defines generative art as a process that contributes to the creation of a completed work of art.
Celestino Soddu, Director of the Generative Design Lab at the Politecnico di Milano technical university in Milan and organiser of the "Generative Art" international conferences, also describes generative art as a tool that allows the artist or designer to synthesise "an ever-changing and unpredictable series of events, pictures, industrial objects, architectures, musical works, environments, and communications." [12] The artist could then produce "unexpected variations towards the development of a project" in order to "manage the increasing complexity of the contemporary object, space, and message." [13] And finally, the Codemuse web site also defines generative art as a process with parameters that the artist should experiment with "until the final results are aesthetically pleasing and/or in some way surprising." Generative art and generative design are–as these quotes show–mainly concerned with the results that generative processes produce. They involve software as a pragmatic-generative tool or aid with which to achieve a certain (artistic) result without questioning the software itself. The generative processes that the software controls are used primarily to avoid intentionality and produce unexpected, arbitrary and inexhaustible diversity. Both the n_Gen DesignMachine, Move Design's entry to the Helsinki Read_Me Festival 2003, and Cornelia Sollfrank’s «Net Art Generator,» [14] which has been generating net art at the touch of a button since 1999, are ironic commentaries on what is often (mis)taken for generative design. [15] insert_coin and walser.php extend beyond such definitions of generative art or generative design insofar as, in comparison to more result-orientated generative design (and also in comparison to many interactive installations of the 1990s, which hid the software in black boxes), they are more concerned with the coded processes that generate particular results or interfaces.
Their focus is not on design, but on the use of software and code as culture–and on how culture is implemented in software. To this end, they develop 'experimental software', a self-contained work (or process) that deals with the technological, cultural, and social significance of software–and not only by virtue of its capacity as a tool with which arbitrary interfaces are generated. In addition, the authors of 'experimental software' are concerned rather with artistic subjectivity, as their use of various private languages shows, and less with displaying machinic creativity and whatever methods were used to form it. "Code can be diaries, poetic, obscure, ironic or disruptive, defunct or impossible, it can simulate and disguise, it has rhetoric and style, it can be an attitude," [16] reads the emphatic definition by 2001 transmediale jury members Florian Cramer and Ulrike Gabriel.

Software Art

The term 'software art' was first defined in 2001 by transmediale [17], the Berlin media art festival, and introduced as one of the festival’s competition categories. [18] Software art, referred to by other authors as 'experimental' [19] and 'speculative software' [20] as well as 'non-pragmatic' and 'non-rational' [21] software, comprises projects that use program code as their main artistic material or that deal with the cultural understanding of software, according to the definition developed by the transmediale jury. Here, software code is not considered a pragmatic-functional tool that serves the 'real' art work, but rather a generative material consisting of machinic and social processes. Software art can be the result of an autonomous and formal creative process, but can also refer critically to existing software and the technological, cultural, or social significance of software. [22] Interestingly, the difference between software art and generative design is reminiscent of the difference between the software art developed in the late 1990s and the early computer art of the 1960s. Artworks from the field of software art "are not art created using a computer," writes Tilman Baumgärtel in his article Experimentelle Software (experimental software), "but art that takes place in the computer; it is not software programmed by artists in order to create autonomous works of art, but software that is itself a work of art. With these programmes, it is not the result that is important, but the process triggered in the computer (and on the computer monitor) by the program code." [23] Computer art of the 1960s is close to conceptual art in that it privileges the concept over its realisation. However, it does not follow this idea through to its logical conclusion: its work, executed on plotters and dot-matrix-printed paper, emphasises the final product and not the program or process that created the work. [24]
In current software art, however, this relationship is inverted; it deals "solely with the process that is triggered by the program. While computer art of the 1960s and 1970s regarded the processes inside computers only as methods and not as works in themselves, treated computers as 'black boxes', and kept computer processes veiled inside, present software projects thematise exactly these processes, make them transparent, and put them up for discussion." [25] (See also the somewhat polemical "Comparison of generative art and software art".)

Performance of code vs. the fascination with the generative, or: "Code has to do something even to do nothing, and it has to describe something even to describe nothing." [26]

The current interest in software, according to my hypothesis, is not only attributable to a fascination with the generative aspect of software, that is, to its ability to (pro)create and generate, in a purely technical sense. Of interest to the authors of these projects is something that I would call the performativity of code–that is, its effectiveness in terms of speech act theory, which can be understood in more ways than just as purely technical effectiveness: not only its effectiveness in the context of a closed technical system, but its effect on the domains of aesthetics, politics, and society. In contrast to generative art, software art is more concerned with 'performance' than with 'competence', more interested in parole than langue [27]; in our context, this refers to the respective actualisations and the concrete realisations and consequences in terms, for example, of societal systems and not 'only' within abstract-technical rule systems. In the two examples above, the generative is highly political–specifically because changing existing texts covertly (in the case of insert_coin) and extracting copyrighted text from a Perl script (in the case of walser.php) are interesting, not in the context of technical systems, but rather in the context of the societal systems that are becoming increasingly dependent on these technical foundations. First, however, there is the fascination with the generative potency of the code: "Codes [are] individual alphabets in the literal sense of modern mathematics […], one-to-one and countable, i.e. using sequences of symbols that are as short as possible, that are, thanks to grammar, gifted with the tremendous ability to reproduce themselves ad infinitum: Semi-Thue groups, Markov chains, Backus-Naur forms, and so on.
That and only that distinguishes such modern alphabets from the one we know, the one which can analyse our languages and that has given us Homer’s epics, but that cannot set up a technical world like computer code can today." [28] Florian Cramer, Ulrike Gabriel, and John F. Simon Jr. also have a particular interest in the algorithms–"the actual code that produces what is then seen, heard, and felt." [29] For them, perhaps the most fascinating aspect of computer technology is that code–whether contained in a text file or a binary number–is machine executable: "an innocuous piece of writing can upset, reprogram, [or] crash the system." [30] In terms of its 'coded performativity', [31] program code also has direct, political consequences on the virtual space that we are increasingly occupying: here, "code is law." [32]

Program Code as Performative Text

Ultimately, the computer is not an 'image medium', as it is often described, but essentially a 'text medium', to which all sorts of audio-visual output devices can be connected. [33] Multimedia, dynamic interfaces are generated from (programmed) texts. It is therefore not enough to speak of the "surface effects of software" (that is, dynamic data presentation through the staging of information and animation) or of a "performative turn in graphical user interfaces," [34] because this view remains too attached to the assumed performativity of those surfaces. Rather, when considering software art and net art projects (and indeed software in general), one should be aware that they are based on two forms of text: a phenotext and a genotext. The surface effects of the phenotext, for example moving texts, are generated and controlled by other texts: by effective texts lying beneath the surface, by program code, or by source texts. Program code is distinguished by the fact that saying and doing coincide in it. Code is an illocutionary speech act (see below) capable of action: "not a description or representation of something, but something that affects, sets into motion, and moves." [35] In this context, Friedrich Kittler refers to the ambiguous term 'command line', a hybrid that has today been almost completely eliminated from most operating systems by graphical user interfaces. Some 20 years ago, however, all user interfaces and editors were either command-line oriented, or one could switch back and forth between modes. While pressing the return key in text mode produces a line break, entering text and pressing the return key in command-line mode issues a potential command: "In computers […], in stark contrast to Goethe’s Faust, words and acts coincide. The neat distinction that the speech act theory has made between utterance and use, between words with and without quotation marks, is no more. In the context of literary texts, kill means as much as the word signifies; in the context of the command line, however, kill does what the word signifies to running programmes or even to the system itself." [36]

How To Do Things With Words
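Kittler's point about `kill` in the preceding section can be made concrete with a minimal sketch. It assumes Python 3 and uses a hypothetical stand-in for a "running programme" (a second Python interpreter that only sleeps); none of this code comes from Kittler or the essay. As a string, `"kill"` is inert data; handed to the operating system through the standard library's `subprocess.Popen.kill()`, the same word ends a running process.

```python
import subprocess
import sys

# As text, "kill" only means something: storing or printing it changes nothing.
word = "kill"
print(f"As text, {word!r} is just {len(word)} characters.")

# As a command, the same word does something. First start a harmless child
# process (a hypothetical stand-in for a "running programme") that just sleeps.
child = subprocess.Popen([sys.executable, "-c", "import time; time.sleep(60)"])
print("child running before kill:", child.poll() is None)

# Popen.kill() sends SIGKILL on POSIX systems; on Windows it is an alias
# for terminate(). Either way, the "utterance" ends the process.
child.kill()
child.wait()  # reap the process so its return code is available
print("child running after kill:", child.poll() is None)
print("return code:", child.returncode)  # non-zero: the process did not exit normally
```

On POSIX systems the reported return code is the negated signal number (here -9, for SIGKILL); on Windows it is a non-zero exit status. In both cases the word has done what it signifies.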

In a series of lectures held in 1955 under the title How to Do Things with Words, [37] John Langshaw Austin (1911–1960) outlined the groundbreaking theory that linguistic utterances by no means serve only to describe a situation or state a fact, but are also used to commit acts. "What speakers of languages have always known and practised intuitively," writes Erika Fischer-Lichte, "was formulated for the first time by linguistics: Speech performs not only a referential function, but also a performative function." [38] Austin’s speech act theory regards speech essentially as action and sees it as effective not by virtue of its results, but in and of itself. This is precisely where speech act theory meets the assumed performativity of code: "[When] a word not only means something, but performatively generates exactly that which it names." [39] Austin identifies three distinct linguistic acts in every speech act. He defines the 'locutionary act' as the propositional content, which can be true or false; this act is not of further interest in this context. 'Illocutionary acts' are acts performed by the words spoken: a person who says something thereby also does something (a judge's verdict "I sentence you", for example, is not a declaration of intent, but an action). Message and execution coincide: simply "uttering [the message] is committing an act." [40] Illocutionary speech acts thus have certain effects and can either succeed or fail, depending on whether certain extra-linguistic conventions are fulfilled. 'Perlocutionary acts', on the other hand, are utterances that trigger a chain of effects; the speech itself and its consequences do not occur at the same time. As Judith Butler notes, the "consequences are not the same as the speech act, but rather the result or the 'aftermath' of the utterance." [41] Butler summarises the difference in a succinct formula: "While illocutionary acts take place with the help of linguistic conventions, perlocutionary acts are performed with the help of consequences. This distinction thus implies that illocutionary speech acts produce effects without delay, so that 'saying' becomes the same as 'doing' and that both take place simultaneously." [42] Insofar as 'saying' and 'doing' coincide, program code can be called an illocutionary speech act. According to Austin, speech acts can be acts without necessarily being effective (that is, without having to be 'successful'); if they are unsuccessful, they are failed performative utterances. Speech acts, then, are not always effective acts. "A successful performative utterance [however] is defined in that the act is not only committed," writes Butler, "but rather that it also triggers a certain chain of effects." [43] Viewed very pragmatically, program code is only useful as a successful performative utterance; if it causes no effect (regardless of whether the effect is desired or not), or if it is not executable, it is simply redundant. In the context of functional, pragmatic software, only executable code makes sense. [44]

Code as a mobilisation and/or immobilisation system

Code, however, does not only have an effect on phenotexts, the graphical user interfaces. 'Coded performativity' [45] also has direct political consequences for the virtual spaces (the Internet, for example) that we increasingly occupy: program code, according to the U.S. law professor Lawrence Lessig, "increasingly tends to become law." [46] Today, control functions are built directly into the network architecture, that is, into its code, according to the theory that Lessig outlines in Code and Other Laws of Cyberspace (1999). Using the Internet provider AOL as an example, Lessig makes it emphatically clear how AOL's architecture, through its very code, prevents all forms of virtual 'rioting'. Different code allows different degrees of freedom: "The decision for a particular code is," according to Lessig, "also a decision about the innovation that the code is capable of promoting or inhibiting." [47] To this extent, code can mobilise or immobilise its users. This powerful code remains invisible, however; Graham Harwood refers to it as an "invisible shadow world of process." [48] In this sense, one could describe the present as a 'postoptical' age in which program code, which (following Walter Benjamin) can also be described as a 'postoptical unconscious', becomes "law." I developed the term 'postoptical' while working on the concept of the 'ctrl_space' exhibition that took place in 2000 and 2001 at the ZKM Centre for Art and Media in Karlsruhe. This exhibition, which was dedicated solely to Bentham's panoptic-visual paradigm, outlined the problematic (and here polemically formulated) thesis that surveillance today only takes place if a camera is present, which, considering the various forms of 'dataveillance' practised today, is an outdated definition. The term 'postoptical', by contrast, describes all digital data streams and (programmed) communication structures and architectures that can be monitored just as easily but contain only a small proportion of visual information. [49] In A Short History of Photography, Walter Benjamin defines the 'optical unconscious' as an unconscious visual dimension of the material world that is normally filtered out of people's social consciousness, and thus remains invisible, but which can be made visible using mechanical recording techniques (such as photography and film: slow motion, zoom). In his words, "it is a different nature which speaks to the camera than speaks to the eye: so different that in place of a space consciously woven together by a man on the spot there enters a space held together unconsciously." [50] He goes on to say that even if photographers can justify their work by capturing others in action, no matter how common that action is, they still cannot know their subjects’ behaviour at the exact moment of capture. Photography and its aids (slow motion, zoom), he claims, reveal this behaviour. They allow us to discover our optical unconscious, just as psychoanalysis allows us to discover our instinctual unconscious. Following Benjamin's definition of the optical unconscious, one might today speak of a 'postoptical unconscious', usually hidden by the graphical interface in computers, which must be made visible using suitable equipment. [51] This 'postoptical unconscious' could be understood as the program code on which surfaces/interfaces are based and which, with its coded performativity, algorithmic genotext, and deep structure, generates the surfaces/interfaces visible to us, while the code itself remains invisible to the human eye.

Focus on an invisible performativity

Many artistic and net-activist projects that have dealt with the politics of electronic data space (such as the Internet) since the late 1990s aim specifically at code and seek to remove the transparency of these technical structures. Artists and net activists have drawn attention to the hotly contested data sphere on the Internet (Toywar platform), built private ECHELON systems (Makrolab by Projekt Atol/Marko Peljhan), developed tools to blur one’s own traces on the Internet (Tracenoizer by LAN), and addressed the increasing restriction of public space through the privatisation of telecommunications infrastructure (Minds of Concern: Breaking News [52] by Knowbotic Research). [53] While in everyday usage 'transparency' usually means clarity and controllability through visibility, in IT it means exactly the opposite: that something can be seen through, is invisible, and that information is hidden. If a system (for example, a surface or graphical user interface) is 'transparent', it is neither recognisable nor perceptible to the user. Although information hiding is often useful for reducing complexity, it can also give the user a false sense of security, since through its invisibility it suggests a direct view of something, a transparency disturbed by nothing, which it would be absurd to believe in: "Far from being a transparent window into the data inside a computer, the interface brings with it strong messages of its own." [54] To make these messages visible, one has to direct one’s attention to the 'transparent window' itself. Just as transparent glass-fronted buildings can be transformed into milky, semi-transparent surfaces in order to become visible, [55] postoptical IT structures must also be stripped of their transparency. In communications networks, similarly, the structures of economic, political, and societal power distribution must be made opaque and thus visible. Ultimately, it is a question of returning the computer-science definition of transparency (that is, the transparency of the interface: information hiding) to its original meaning: clarity and controllability through visibility. [56]

Codeworks: "M @ z k ! n 3 n . k u n z t . m2cht . fr3!"

Works in the area of software art, therefore, focus on the code itself, even if this is not always explicitly revealed or emphasised. Software art draws attention to the aesthetic and political subtexts of ostensibly neutral technical command sequences. What is surely the "most radical understanding of computer code as artistic material" [57] can be seen in the so-called 'codeworks' [58] and their artistic (literary) examination of program code. Codeworks use formal ASCII instruction code and its aesthetics without referring back to the surfaces and multimedia graphical user interfaces it creates. The works by Graham Harwood, Netochka Nezvanova, and mez [59] introduced in this context bring to mind the existence of a 'postoptical unconscious' that is usually hidden by the graphical interface. The Australian net artist "mez" [60] (Mary-Anne Breeze) and the anonymous net identity Netochka Nezvanova (also known under the pseudonyms nn, antiorp, and Integer) have for some time been producing, in addition to hypertext works and software for real-time video manipulation, text works that they usually send to mailing lists such as Nettime, Spectre, Rhizome, 7-11, or Syndicate in the form of simple e-mails. Apart from attachments and the increasing use of HTML, e-mail as a text medium allows only ASCII text and is therefore (technologically) restricted. mez and antiorp, however, have both developed their own languages and styles of writing: mez calls hers 'M[ez]ang.elle', while Netochka Nezvanova/Integer refers to hers as 'Kroperom' or 'KROP3ROM|A9FF'. Both deal with the artistic appropriation of program code. Those unfamiliar with programming languages will recognise little more than illegible noise in this contemporary form of mail art, which gives the impression of a file that has been incorrectly formatted or decoded by a software program. Those who are semi-literate in programming languages and source code, however, will soon realise that computer code and programming language are being used and appropriated here. The status of these languages, or fragments of language, remains ambivalent: in the recipient's perception it oscillates between the assumed executability (functionality) and non-executability (dysfunctionality) of the code; in short, between significant information and asignificant noise. Depending on the context, useless character strings suddenly become interpretable and executable commands, or vice versa: performative program code becomes redundant data.

mez

mez’s self-created art writing style, 'M[ez]ang.elle', is modelled on computer languages […] but without being written in strict command syntax. [61] It contains a mixture of ASCII art and pseudo-program code. "Like the portmanteau words of Lewis Carroll and James Joyce," writes Florian Cramer, "the words of mezangelle interweave in double and multiple ways. The square brackets originate from programming language and are borrowed from common notation of Boolean algebra in that they reference several characters simultaneously, thus describing polysemy." [62] mez lets individual words physically become crossover points of different meanings: here we are dealing with material ambiguity or polysemy implemented in linear text or, to echo Lacan, with the realised "vertical" of a point, [63] that is, the simultaneous presence of different potentialities within the same word: "mez introduces the hypertext principle of multiplicity into the word itself. Rather than produce alternative trajectories through the text on the hypertext principle of 'choice', here they co-exist within the same textual space." [64] mez’s texts enter into an endless shifting of meaning that generally cannot be pinned down. As Florian Cramer notes, this polysemy is also a polysemy of the sexes, as found in many of mez's texts: "'fe[male]tus' can be simultaneously read as 'foetus,' 'female,' and 'male.' Other words take on the syntax of file names and directory trees as well as the quoting conventions of e-mail and chats." [65]

Netochka Nezvanova

'M @ z k ! n 3 n . k u n z t . m2cht . fr3!', taken from the signature of Netochka Nezvanova a.k.a. Integer, stands for "Maschinenkunst macht frei" (machine art brings freedom). Integer became well known in 1998 for bombarding mailing lists with e-mails that at first glance appeared to contain only illegible noise; that is, she deliberately introduced a form of noise into human (tele)communication. At second glance, however, it becomes clear that the e-mails contain a mixture of human and machine language. Integer calls her language 'Kroperom'. It is distinctive in that the phonetic system of the Latin alphabet is replaced by the characters of the American Standard Code for Information Interchange (ASCII), the lingua franca of computer culture. For example, in the Kroperom word 'm9d', the phonetic value 'ine' is replaced by the digit '9' ('nine'). This language makes use of more than just phonetic substitution, however. In 'm@zk!n3n kunzt', the '@' replaces the 'a', the 'zk' replaces the 'sch' sound, the exclamation mark replaces the 'i', and the '3' the letter 'e'. Characters are replaced according to visual similarity ('!' for 'i') as well as visual and phonetic analogy ('3' or 'three' for 'e'). The human language, in this case a mixture of German and English, is interspersed and infiltrated with characters and computer code metaphors. In addition, the Kroperom text acquires an emphatic quality through its extensive use of exclamation marks (which stems simply from the frequent occurrence of the letter 'i' in German) and transforms executable computer commands into oppressive, sometimes amusing, human commands: "Do this! Do that!" The reader has to use various strategies to decipher the words in a script consisting of letters, numbers, and ASCII characters. This impedes and destabilises the reading process and triggers different associations. On this point, Josephine Barry writes: "The act of reading becomes pointedly self-reflexive and, in terms of chaos theory, nonlinear experience with each word representing a junction of multiple systems." [66] Whether the Kroperom text, which closely resembles executable program code, could be compiled elsewhere in the computer and become machine-readable, runnable, and thus executable remains an open question.

Performativity and Totality of Genotexts
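The four substitutions the Netochka Nezvanova section names for 'm@zk!n3n kunzt' can be written down as a small rule table. The following Python sketch is purely illustrative: the rule list and the function name `kroperomize` are hypothetical, cover only those four substitutions, and are not Integer's own procedure. It reproduces 'm@zk!n3n' and 'fr3!' from the signature, but not 'kunzt' or 'm2cht', whose substitutions the text does not explain. Mechanising the spelling in this way says nothing about whether a Kroperom text as a whole could be executed.

```python
# Hypothetical rule table: only the four substitutions the essay names
# for 'm@zk!n3n kunzt'. Order matters: 'sch' is rewritten before the
# single vowels are touched.
RULES = [
    ("sch", "zk"),  # the 'sch' sound
    ("a", "@"),     # '@' for 'a'
    ("i", "!"),     # visual similarity: '!' for 'i'
    ("e", "3"),     # visual/phonetic analogy: '3' for 'e'
]


def kroperomize(text: str) -> str:
    """Apply the rule table to lower-cased text, one rule after another."""
    result = text.lower()
    for plain, coded in RULES:
        result = result.replace(plain, coded)
    return result


for word in ["Maschinen", "frei"]:
    print(word, "->", kroperomize(word))
# Maschinen -> m@zk!n3n
# frei -> fr3!
```

Because each rule is a plain left-to-right string replacement, the order of the table is the only "grammar" here; applying 'a' before 'sch' would give the same result in this case, but rules that overlap would not commute.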

Whether or not Jodi's walkmonster_start () e-mail is executable code is also questionable. What plays a role here is perhaps less the actual technical execution than the knowledge of code's potential executability and performativity. Geoff Cox, Alex McLean, and Adrian Ward, however, argue that "the aesthetic value of code lies in its execution, not simply in its written form." [67] While this statement must be accepted for insert_coin and walser.php, whose controversy (and perhaps even poetry) lies in their technical execution, it must be qualified with regard to the structure of codeworks. The poetry of codeworks lies not only in their textual form, but also in the knowledge that they could potentially be executed. This leads to the question of whether formal program code can have an audience outside the machine it addresses. Can formal code be performative without the machine that implements and executes it? I agree with Florian Cramer, who disputes that "machine language [is] only machine readable": "It is important to keep in mind that computer code, and computer programmes, are not machine creations and machines talking to themselves, but written by humans." [68] It is trivial to observe that man-made computer code can also be read by other humans or translated back into human language. People were able to follow the entire battle scenario of walkmonster_start () as soon as they received the e-mail, without compiling it first. The generative aspect of 'codeworks' should therefore be emphasised (and the definition of the generative broadened), since code is not only executable in technical environments but, in a wider sense, can also become productive in the reader. In these projects, human language is infiltrated with machine control codes and algorithms, similar to the heretical technique of speaking in tongues or the Surrealist écriture automatique (automatic writing), both techniques that seek to deactivate consciousness (putting one into a trance or a state of sleep) in order to give voice to the divine or the unconscious. In contrast to the Surrealist theory that freeing the unconscious leads to social revolution, the creation of a half-human, half-machinic hybrid language appears partly fervent and partly compulsive. If not evidence of a seizure of power, the convulsive appearance of the 'postoptical unconscious' is at least one more sign that, as per Lacanian theory, it is not we who speak, but that we are 'spoken'. Favouring program code over surfaces/interfaces, genotext over phenotext, and poiesis over aisthesis has a liberating effect in the ASCII works and codeworks of mez, Jodi, and Netochka Nezvanova insofar as the focus on the 'postoptical unconscious' dispels a deception: it allows one to take leave of the belief, for example, that even today surveillance can only take place when a camera is present. Codeworks draw our attention to the increasing codedness and programmedness of our media environment. These works use the poor man’s medium, text, which in the context of the command line also appears performative or executable. By working specifically with this ambivalence between simplicity and totality of execution, they warn of the potentially totalitarian dimension of algorithmic genotext.

Translation by Dr. Donald Kiraly

++++++++++++++++++++++++++++++++++++++++++

[1] Slogan for the program Web Stalker (1997) by the London artists group I/O/D.

Posted by Nina Wenhart at 7:58 PM · 0 replies · tags: code art, generative art, text

2008-10-02

>> Herbert Franke, "Computer Graphics, Computer Art", 1971

"The works from computers nowadays covered by the term 'computer art' are in my opinion among the most remarkable products of our time:

not because they surpass, or even approach, the beauty of traditional forms of art, but because they place established ideas of beauty and art in question;

not because they are intrinsically satisfactory or even finished, but because their very unfinished form indicates the great potential for future development;

not because they resolve problems, but because they raise and expose them.

Computer art [...] expresses the progress taking place in computer science."

(to be continued)

Posted by Nina Wenhart at 9:17 PM · 0 replies · tags: computer graphics, publications, quotes

>> Virtual Reality (VR) + CAVE

Virtual Reality in Art:

(from wikipedia: http://en.wikipedia.org/wiki/Virtual_reality)

"David Em was the first fine artist to create navigable virtual worlds in the 1970s. His early work was done on mainframes at III, JPL and Cal Tech. Jeffrey Shaw explored the potential of VR in fine arts with early works like Legible City (1989), Virtual Museum (1991), Golden Calf (1994).

Canadian artist Char Davies created immersive VR art pieces Osmose (1995) and Ephémère (1998). Maurice Benayoun's work introduced metaphorical, philosophical or political content, combining VR, network, generation and intelligent agents, in works like Is God Flat (1994), The Tunnel under the Atlantic (1995), World Skin (1997).

Other pioneering artists working in VR have included Luc Courchesne, Rita Addison, Knowbotic Research, Rebecca Allen, Perry Hoberman, Jacki Morie, and Brenda Laurel."

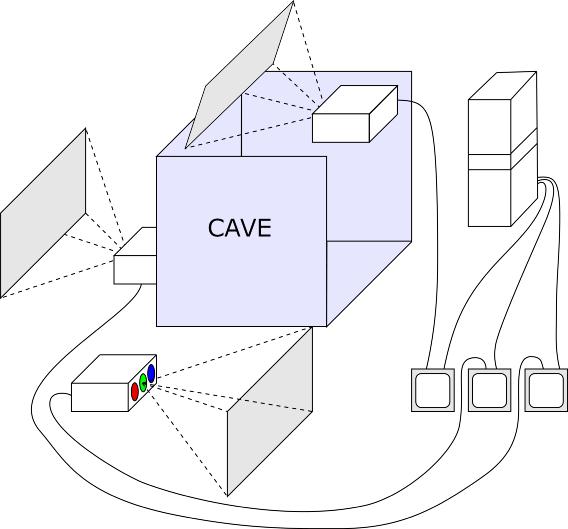

CAVE: a 3D immersive virtual environment, developed by Dan Sandin and Tom DeFanti at the Electronic Visualization Lab (EVL), Chicago:

http://en.wikipedia.org/wiki/Cave_Automatic_Virtual_Environment

some CAVEs:

@ EVL: http://www.evl.uic.edu/core.php?mod=4&type=1&indi=161

and http://www.evl.uic.edu/pape/CAVE/

@ Ars Electronica: http://www.aec.at/en/center/current_exhibition_detail.asp?iProjectID=11197

@ SAIC: http://bcchang.com/vrlab/index.php

some devices used in the CAVE:

head mounted display:

http://en.wikipedia.org/wiki/Head_mounted_display

data glove:

http://en.wikipedia.org/wiki/Data_glove

wand:

a hand-held, tracked input device through which the user interacts with and moves through the virtual environment.

what is...

... an immersive environment:

http://en.wikipedia.org/wiki/Immersive_environment

... augmented reality:

http://en.wikipedia.org/wiki/Augmented_reality

personalities important for the development of VR:

- Myron Krueger:

http://en.wikipedia.org/wiki/Myron_Krueger

- Dan Sandin:

http://en.wikipedia.org/wiki/Dan_Sandin

- Tom Defanti:

http://en.wikipedia.org/wiki/Thomas_A._DeFanti

- Scott Fisher:

http://en.wikipedia.org/wiki/Scott_Fisher_(technologist)

- Jaron Lanier:

http://en.wikipedia.org/wiki/Jaron_Lanier

- Marvin Minsky:

http://en.wikipedia.org/wiki/Marvin_minsky

- Timothy Leary:

By the mid 1980s, Leary had begun to incorporate computers, the Internet, and virtual reality into his aegis of thought. Leary established one of the earliest sites on the World Wide Web, and was often quoted describing the Internet as "the LSD of the 1990s." He became a promoter of virtual reality systems, and sometimes demonstrated a prototype of the Mattel Power Glove as part of his lectures (as in From Psychedelics to Cybernetics). Around this time he cultivated friendships with a number of notable people in the field, including Brenda Laurel, a pioneering researcher in virtual environments and human-computer interaction.

http://en.wikipedia.org/wiki/Timothy_Leary#Leary.27s_last_two_decades

another VR environment: the ImmersaDesk

http://www.evl.uic.edu/core.php?mod=4&type=1&indi=163

some Art Projects done for the CAVE:

"Crayoland", one of the first and most successful CAVE visualizations. Created by Dave Pape in 1995, it uses 2D crayon drawings as textures for the objects in its 3D environment.

"CAVE", a project by Austrian artist Peter Kogler in collaboration with the Ars Electronica Futurelab, was realized in 1999. As a visitor, you could navigate through Kogler's abstract shapes.

"World Skin" is an award-winning CAVE project created by French artists Maurice Benayoun and Jean-Baptiste Barrière. What was special about it was its novel approach to the user's interaction with the environment. The wand served as a photo camera: when you took a photo of the scenes around you, the object was washed out, leaving a blank space.

"Gesichtsraum" by Johannes Deutsch is another example of a collaboration between an artist and Ars Electronica's Futurelab: Deutsch, the programmers, and the 3D modellers worked together closely for several months to develop an immersive environment out of Johannes Deutsch's paintings of his son.

Posted by Nina Wenhart at 8:46 PM · 2 replies · tags: AR, artists, augmented reality, CAVE, media art, virtual reality, VR

>> tron (movie), 1982

http://en.wikipedia.org/wiki/Tron_(film)

to watch the movie online, see:

http://static.youku.com/v1.0.0330/v/swf/qplayer.swf?VideoIDS=XMzUxMDU5NTY&embedid=-&showAd=0


Posted by Nina Wenhart at 8:40 PM · 0 replies · tags: computer animation, movie

2008-10-01

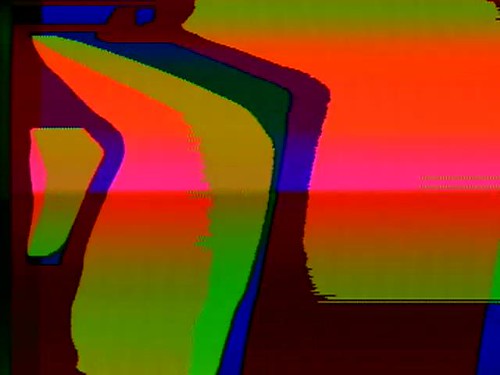

>> Sandin Image Processor + Distribution Religion

from: http://www.evl.uic.edu/core.php?mod=4&type=1&indi=337

Between 1971 and 1973, Dan Sandin designed and built the Sandin Image Processor (IP), a patch-programmable analog computer for real-time manipulation of video inputs through the control of grey-level information. The version that allowed for color manipulation was referred to as the Color IP.

This modular design was based on the Moog synthesizer and is often explained as the "video equivalent of a Moog audio synthesizer" or as a video synthesizer. That is, it accepted basic video signals and mixed and modified them in a fashion similar to what a Moog synthesizer did with audio. An analog, modular, real time, video processing instrument, it provided video processing performance levels and produced subtle and delicate video effects that became popular with early video artists.

The IP's real-time effects naturally led to its use in live theater performance, including "Electronic Visualization Events" where the IP was seen processing the output of Tom DeFanti's Graphics Symbiosis System - GRASS. Real-time image processing was combined with sound to create visual concerts.

Physically, an Image Processor system would be built out of modules. Several types of modules were defined; each was typically an aluminum box containing a circuit board, with video connectors and knobs on the front and a power connector on the back.

The modules would be organized in rows. Individual systems could vary in size and increase in power with the addition of more modules. Typical modules would be signal sources, combiners and modifiers, effects modules, sync, color encoder, color decoder, and NTSC video interface.

Sandin was an advocate of education and espoused a non-commercial philosophy, emphasizing public access to processing methods and to the machines that assist in generating the images.

Accordingly, he placed the circuit board layouts for the IP with a commercial circuit board company and freely published schematics and other documentation. The Do It Yourself ethos combined with the low cost of the parts and a free dissemination of information created a large following of video artists, students, and others interested in experimental video electronics. The modules were often assembled by individuals who had no prior knowledge of electronics fabrication. Also, from time to time Sandin and staff would hold fix-it parties where modules that had failed to work would be repaired by the senior staff.

The Image Processor's educational success can be found in its numbers. In its time, more IP's were built than any other commercial "video-art" synthesizer. This distribution method was, and to a very large extent still is, unique in the proprietary and competitive industrial field of advanced electronics.

Sandin's IP, and the instructional video that accompanied the modules, trained and inspired numerous individuals who would go on to make substantial contributions to both art and science.

Sandin received grants in support of his work from the Rockefeller Foundation (1981), the National Science Foundation, the National Endowment for the Arts (1980) and the Guggenheim Foundation (1978). Sandin's early IP video work "Spiral PTL" was one of the first pieces included in the Museum of Modern Art's video art collection.

for current images made with the IP, see Satrom's Flickr page: http://www.flickr.com/photos/jonsatrom/sets/72157602017681573/

The construction instructions for the Sandin Image Processor were distributed freely, so anyone who wanted one could build it. This example of open source was called "Distribution Religion" or "copy it right". For further information see http://mediaarthistories.blogspot.com/2008/06/dan-sandin-demonstrates-sandin-image.html. To see and download the Distribution Religion, visit: http://criticalartware.net/rsrc/dwnl/dS_DISTREL.dwnl/www_VR/

Posted by Nina Wenhart ... at 12:26 AM · 0 replies · tags: art + technology, early new media, open source, technology, video art, video synthesizer


>> collaborations of artists and engineers, some quotes

>> Leslie Mezei, “Collaboration and multimedia are not impossible, only extremely hard and rarely successful.”

>> Billy Klüver, “The raison d'être of E.A.T. is the possibility of a work which is not the preconception of either the engineer or the artist, but is the result of the exploration of the human interaction between them.”

>> Lilian Schwartz, “The associative properties once used by the non-computer artist no longer correspond to the direct will of the artist” → simple acts become major programming tasks; mastery of the medium

>> Gene Youngblood: “the ultimate computer will be the sublime aesthetic device: a parapsychological instrument for the direct projection of thoughts and emotions.”

>> A. Michael Noll, “Most certainly the computer is an electronic device capable of performing only those operations that it has been explicitly instructed to perform.”

>> Jasia Reichardt, “Noll is one of the few people involved in computer art from the technological end who has always claimed that the roles of the artist and the engineer are not only not interchangeable, but beyond making his techniques available and accessible, the engineer had no role in the area of creative activity generally called art.” (in: “The Computer in Art”)

>> Leslie Mezei, “The first wave of artists that came to the computer expected miracles from it without a serious effort of learning and exploring and creation on their part.”

>> Herbert Franke: “A new generation of computer artists comes on the stage - Many of them have collaborated in the technical development, but also use their medium for free artistical performance. So they are entitled to call themselves artists as well as technicians.”

>> A. Michael Noll in “Expanded Cinema”, 1970:

“First of all, artists in general find it extremely difficult to verbalize the images and ideas they have in their minds.”

>> “Klüver's wish was to find 'new means of expressions for artists... and to find out where they stood in relation to the society that was sending men to the moon.'”

>> Gene Youngblood: “man so far has used the computer as a modified version of older, more traditional media.” [...] “But the chisel, brush, and canvas are passive media whereas the computer is an active participant in the creative process.”

>> Gene Youngblood: “Most certainly the computer [...] has been explicitly instructed to perform.”

>> Gene Youngblood: “For the first time, the artist is in a position to deal directly with fundamental scientific concepts of the twentieth century.”

>> Gene Youngblood, “When that occurs we will find that a new kind of art has resulted from the interface. Just as a new language is evolving from the binary elements of computers rather than the subject-predicate relation of the Indo-European system, so will a new aesthetic discipline that bears little resemblance to previous notions of art and the creative process. Already the image of the artist has changed radically.

In the new conceptual art, it is the artist's idea and not his technical ability in manipulating media that is important. Though much emphasis currently is placed on collaboration between artists and technologists, the real trend is more toward one man who is both artistically and technologically conversant.”

>> Billy Klüver, “It is hard to think of two professions with such great dissimilarities as the artists and the scientists. … I feel like a man standing with one leg each on an icefloat [sic]. The icefloats are drifting apart and I will end up with the fish. C.P. Snow cornered the market with his ‘two cultures.’ Art and technology, art and science are not two cultures, they are two separate worlds speaking two entirely different languages” (Loewen 1975: 41).

summary of keywords:

--> language, new language, translation, conversant in both art and technology (Youngblood, Franke); human-computer interaction; human-human interaction (Klüver, Knowlton); conceptual art, art as research; direct will, deal directly (Schwartz, Youngblood); miracles, no serious learning efforts (Mezei, Noll, Knowlton); collaboration hardly ever successful (Mezei, Knowlton) vs. equal partners (Klüver)

Posted by Nina Wenhart ... at 12:03 AM · 0 replies · tags: artists, collaboration, engineers, quotes


>> labels

- Alan Turing (2)

- animation (1)

- AR (1)

- archive (14)

- Ars Electronica (4)

- art (3)

- art + technology (6)

- art + science (1)

- artificial intelligence (2)

- artificial life (3)

- artistic molecules (1)

- artistic software (21)

- artists (68)

- ASCII-Art (1)

- atom (2)

- atomium (1)

- audiofiles (4)

- augmented reality (1)

- Baby (1)

- basics (1)

- body (1)

- catalogue (3)

- CAVE (1)

- code art (23)

- cold war (2)

- collaboration (7)

- collection (1)

- computer (12)

- computer animation (15)

- computer graphics (22)

- computer history (21)

- computer programming language (11)

- computer sculpture (2)

- concept art (3)

- conceptual art (2)

- concrete poetry (1)

- conference (3)

- conferences (2)

- copy-it-right (1)

- Critical Theory (5)

- culture industry (1)

- culture jamming (1)

- curating (2)

- cut up (3)

- cybernetic art (9)

- cybernetics (10)

- cyberpunk (1)

- cyberspace (1)

- Cyborg (4)

- data mining (1)

- data visualization (1)

- definitions (7)

- dictionary (2)

- dream machine (1)

- E.A.T. (2)

- early exhibitions (22)

- early new media (42)

- engineers (3)

- exhibitions (14)

- expanded cinema (1)

- experimental music (2)

- female artists and digital media (1)

- festivals (3)

- film (3)

- fluxus (1)

- for alyor (1)

- full text (1)

- game (1)

- generative art (4)

- genetic art (3)

- glitch (1)

- glossary (1)

- GPS (1)

- graffiti (2)

- GUI (1)

- hackers and painters (1)

- hacking (7)

- hacktivism (5)

- HCI (2)

- history (23)

- hypermedia (1)

- hypertext (2)

- information theory (3)

- instructions (2)

- interactive art (9)

- internet (10)

- interview (3)

- kinetic sculpture (2)

- Labs (8)

- lecture (1)

- list (1)

- live visuals (1)

- magic (1)

- Manchester Mark 1 (1)

- manifesto (6)

- mapping (1)

- media (2)

- media archeology (1)

- media art (38)

- media theory (1)

- minimalism (3)

- mother of all demos (1)

- mouse (1)

- movie (1)

- musical scores (1)

- netart (10)

- open source (6)

- open source - against (1)

- open space (2)

- particle systems (2)

- Paul Graham (1)

- performance (4)

- phonesthesia (1)

- playlist (1)

- poetry (2)

- politics (1)

- processing (4)

- programming (9)

- projects (1)

- psychogeography (2)

- publications (36)

- quotes (2)

- radio art (1)

- re:place (1)

- real time (1)

- review (2)

- ridiculous (1)

- rotten + forgotten (8)

- sandin image processor (1)

- scientific visualization (2)

- screen-based (4)

- siggraph (1)

- situationists (4)

- slide projector (1)

- slit scan (1)

- software (5)

- software studies (2)

- Stewart Brand (1)

- surveillance (3)

- systems (1)

- tactical media (11)

- tagging (2)

- technique (1)

- technology (2)

- telecommunication (4)

- telematic art (4)

- text (58)

- theory (23)

- timeline (4)

- Turing Test (1)

- ubiquitous computing (1)

- unabomber (1)

- video (14)

- video art (5)

- video synthesizer (1)

- virtual reality (7)

- visual music (6)

- VR (1)

- Walter Benjamin (1)

- wearable computing (2)

- what is? (1)

- Williams Tube (1)

- world fair (5)

- world machine (1)

- Xerox PARC (2)


- Nina Wenhart ...

- ... is a Media Art historian and researcher. She holds a PhD from the University of Art and Design Linz, where she works as an associate professor. Her PhD thesis is on "Speculative Archiving and Digital Art", focusing on facial recognition and algorithmic bias. Her master's thesis, "The Grammar of New Media", was on descriptive metadata for Media Arts. For many years, she has worked in the field of archiving and documenting Media Art, recently at the Ludwig Boltzmann Institute for Media.Art.Research and before that as head of the Ars Electronica Futurelab's video studio, where she created its archives and worked primarily with the archival material. She taught the Prehystories of New Media class at the School of the Art Institute of Chicago (SAIC) and in the Media Art Histories program at Danube University Krems.